Beware, sharks

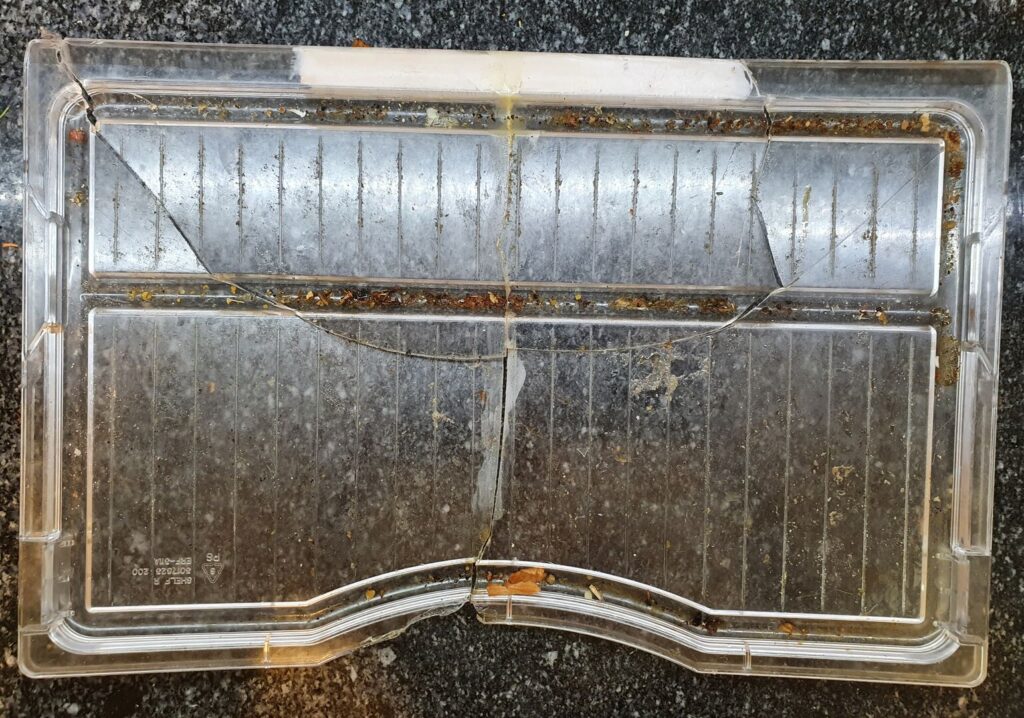

It all started with this:

It’s a relatively innocuous photo. But it cost me the best part of a day. How could that be? I practically hear you demanding of me. Well, let’s pretend we’re Holmes and Watson here.

Holmes: What do you deduce from this?

Watson: It is a photograph of, I believe, a broken shelf. From a refrigerator, perhaps? The break tells me the owner has had some misfortune. There is debris, which leads me to believe that the owner is also not fastidious. I see older cracks, which tells me this is not the first time the refrigerator shelf — if indeed that is what this is — has broken.

Holmes: Good, but what else?

Watson: Well, there is a mark indicating the type of plastic… The surface it is on seems to be some kind of granite or granite-effect worktop… I, er… Oh! The person must have taken that photo so as to show it to someone?

Holmes: Exactly-

We’ll leave behind the framing device there, as it gets us to the point and it turns out it was less funny than I thought: I wanted to post a photo somewhere. How could that possibly go wrong?

How Do You Get Files Off Your Phone?

I took the photo of the shelf because, as Watson said, I wanted to show it to someone (actually, post it to a QA on suitable plastic for a fridge shelf), which isn’t a controversial thing to want to do with a phone camera. Except it’s weirdly inconvenient to get a file off your phone and on to your computer.

Exercise 1: Find a group of computer nerds (IRC channel). Ask them “what is the best way to get a file off my phone and on to my computer”. Record the amount of time it takes for the discussion to get back on topic.

(editor’s note: please don’t troll IRC channels)

For reference, the way I’ve been doing it hitherto is to use the “share” feature to upload the file to Google Drive, and download it from there. Not overly faffy, compared to, say, transferring the file over USB, which clocks in around 8 steps.

Now, because Google changed how they calculate photo storage a couple of years ago, and because they had said they were going to discontinue Google Apps For Your Domain (now… Workspaces? Something like that), I needed something else to back up the photos on my phone before I travelled. So, at the suggestion of ksyme99, I went with Syncthing, which I had used about six or so years ago to synchronise Europa Universalis IV save games back when they were dog slow to transfer and desynchs were common.

If this seems like a case of “I wore an onion on my belt, which was the style at the time”, please bear with me as almost all of this has a bearing on the outcome.

The Point of Derailment

I checked my phone, and saw Syncthing wasn’t sending to the backup server. Odd, but it had been behaving a little strangely of late- byobu (a terminal multiplexer) wasn’t responding, other commands seemed to hang, that sort of thing. Rather than dig deeper and investigate, I rebooted the DomU (Xen term for VM guest). Bad move.

I gave the DomU twenty seconds or so to come back up, more than enough time given it’s pretty barebones. ssh wouldn’t connect, so I pinged the machine. That seemed to work (although I pinged by hostname and it replied with the wrong hostname, but correct IP) and since I was getting a response, I tried ssh again. Connection refused. Err? I ssh’d to the Dom0 (host/hypervisor) and pulled up the console; systemd was waiting on a stop job for my user, with a notional timeout. I’ve seen this occasionally on my main machine; I think it’s where a process refuses to be killed and holds up the entire shutdown/reboot process. In this case it was probably the weirdly-behaving byobu session. No matter, I destroy’d the DomU. So far, so relatively inconsequential.

I create’d the DomU guest and tried to ssh in. “No route to host”, was the answer. Uh? Oh, the ping I had left running from before was also reporting the same thing. Not good. I checked the running Xen DomUs on the host, and sure enough there were only two. Oh dear. I tried create-ing the DomU again but attaching to the console on creation. It seemed fine- the pygrub bootloader came up, but shortly after that it mysteriously died, complaining about things like unable to get domain type for domid, and unable to exec console client: No such file or directory. Searches for those error messages gave some Xen-related results which I looked through hoping to find a commonality, but there was nothing particularly helpful.

Digging

Given that neither the messages themselves nor the search results associated with them were terribly helpful, I tried getting more info from Xen. As a hypervisor, it has access to the entire state of the guest, so it should make that information obvious, right?

Well…

In /var/log/xen/ there were nearly 300 different log files. I grep’d for recent ones as I can never quite remember the option to sort ls output by date (ed: ls -t / ls -tr) and saw ones related to both the bootloader (aha!) and the DomU in question (ahaa!).
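For next time, the date sort can be wrapped in a tiny helper. This is only a sketch: the function name `recent_logs` is made up for illustration, and the usage comment assumes the logs live in /var/log/xen/ as they did here.

```shell
# List the N most recently modified entries in a directory, newest first.
# recent_logs is a hypothetical helper name, not something Xen provides.
recent_logs() {
    dir=$1
    count=${2:-10}   # default to the ten newest entries
    # ls -t sorts by modification time, newest first
    ls -t "$dir" | head -n "$count"
}

# Usage:
#   recent_logs /var/log/xen 5
```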

Checking the bootloader first, I found:

Using <class 'grub.GrubConf.Grub2ConfigFile'> to parse /boot/grub/grub.cfg

WARNING:root:Unknown directive /boot/grub/grub.cfg

ESC(BESC)0ESC[1;24rESC[m^OESC[?7hESC[?1hESC=ESC[HESC[JESC[?1hESC=

pyGRUB version 0.6

ESC[0m^Nlqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqk^O

ESC[0m^Nx^O ESC[0;7m^OArch GNU/Linux, with Linux core repo kernel ESC[m^O ESC[0

m^Nx^O

ESC[0m^Nx^OESC[72CESC[0m^Nx^O

ESC[0m^Nx^OESC[72CESC[0m^Nx^O

ESC[0m^Nx^OESC[72CESC[0m^Nx^O

ESC[0m^Nx^OESC[72CESC[0m^Nx^O

ESC[0m^Nx^OESC[72CESC[0m^Nx^O

ESC[0m^Nx^OESC[72CESC[0m^Nx^O

ESC[0m^Nx^OESC[72CESC[0m^Nx^O

ESC[0m^Nmqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqj^O

ESC[70DESC[0m^N^OUse the ESC[0m^N^^O and ESC[0m^Nv^O keys to select which entry is highlighted.

ESC[58DPress enter to boot the selected OS, 'e' to edit the

ESC[52Dcommands before booting, 'a' to modify the kernel arguments

ESC[59Dbefore booting, or 'c' for a command line.ESC[12AESC[26CESC[18BESC[68DESC[?1lESC>ESC[24;1H^MESC[?1l

ESC>

bootloader.17.log (END)

That seems to be verbatim output from the bootloader, complete with control characters.
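If the control characters get in the way, they can be filtered out before reading. The following is a rough sketch rather than a complete terminal-escape parser: `strip_ansi` is a made-up name, and it only handles the kinds of sequences visible in the log above (CSI sequences, character-set selection, keypad mode, shift-in/shift-out). The line-drawing letters (the runs of q and x) are ordinary characters and will remain.

```shell
# Strip common terminal escape sequences from stdin.
# strip_ansi is a hypothetical helper; it is not exhaustive.
strip_ansi() {
    esc=$(printf '\033')
    sed -e "s/${esc}\[[0-9;?]*[A-Za-z]//g" \
        -e "s/${esc}[()][0-9A-B]//g" \
        -e "s/${esc}[=>]//g" |
        tr -d '\016\017\r'   # drop shift-out, shift-in and carriage returns
}

# Usage:
#   strip_ansi < /var/log/xen/bootloader.17.log | less
```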

Perhaps the DomU log would be more helpful?

Waiting for domain pandora (domid 12) to die [pid 21297]

Domain 12 has shut down, reason code 3 0x3

Action for shutdown reason code 3 is preserve

Done. Exiting now

xl-pandora.log.5 (END)

Er, not really. Reason code 3 / 0x3 is ‘crash’, apparently, but that didn’t tell me much.

I had a look for ways of getting more info, but neither the FAQ, the ‘common problems’ page, the beginner’s guide, nor the options in the config file told me much. I saw references to xl dmesg, which only had repeated warnings:
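For reference, here is a small lookup for those reason codes. The mapping follows the SHUTDOWN_* constants in Xen's public sched.h header as far as I know them, so treat it as an assumption and check it against your own Xen version. The function name is made up.

```shell
# Translate a Xen shutdown reason code into its name.
# Mapping assumed from the SHUTDOWN_* constants in Xen's public headers.
xen_shutdown_reason() {
    case $1 in
        0) echo poweroff ;;
        1) echo reboot ;;
        2) echo suspend ;;
        3) echo crash ;;
        4) echo watchdog ;;
        5) echo soft_reset ;;
        *) echo unknown ;;
    esac
}

# Usage:
#   xen_shutdown_reason 3
```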

(XEN) CPU0: Running in modulated clock mode

(XEN) CPU1: Running in modulated clock mode

(XEN) CPU2: Running in modulated clock mode

(XEN) CPU3: Temperature above threshold

(XEN) CPU3: Running in modulated clock mode

(XEN) CPU1: Temperature above threshold

(XEN) CPU1: Running in modulated clock mode

(XEN) CPU0: Temperature above threshold

(XEN) CPU0: Running in modulated clock mode

(XEN) CPU2: Temperature above threshold

(XEN) CPU2: Running in modulated clock mode

(XEN) CPU3: Temperature above threshold

(XEN) CPU1: Temperature above threshold

(XEN) CPU3: Running in modulated clock mode

(XEN) CPU1: Running in modulated clock mode

(XEN) CPU0: Temperature above threshold

(XEN) CPU0: Running in modulated clock mode

(XEN) CPU3: Temperature above threshold

(XEN) CPU3: Running in modulated clock mode

Temperature warnings are Not Real Good™, but that’s separate to the problem I was facing.

And checking xl itself, it had a verbose option (-v), but it printed oddly, like newlines were missing, and from what I could see didn’t have any relevant information.

Seeking Help

I jumped into #xen after joining OFTC and made my plea:

<bertieb> Hi folks, I have a DomU which was running for ~150 days failing to come up on 'xl create' (dom0 running xen 4.8 I think); it complains "unable to get domain type" / "unable to exec console client: No such file or directory"; pygrub bootloader shows up with the appropriate entry but shortly after selecting it the domU exits with those errors- how can I get more info?

I was asked for xl -vvv create output, which I supplied. I was then asked for xl dmesg output, and andyhhp had the key suggestion:

<andyhhp> setting on_crash="preserve" should keep the VM around after it crashes

I had missed this when looking over the configuration file reference, as it’s ‘buried’ (kinda) with the other options of the on_... states (destroy, restart, etc.). It’s a really, really useful setting; so much so I called it out in its own post.
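As a sketch of how that setting might be applied, the helper below sets on_crash to "preserve" in a guest config file, replacing an existing on_crash line or appending one. The function name and the config path in the usage comment are assumptions for illustration; editing the file by hand works just as well.

```shell
# Make sure a Xen guest config contains on_crash = "preserve".
# ensure_on_crash_preserve is a hypothetical helper name.
ensure_on_crash_preserve() {
    cfg=$1
    if grep -q '^[[:space:]]*on_crash[[:space:]]*=' "$cfg"; then
        # Replace the existing setting, keeping a .bak copy of the file
        sed -i.bak 's/^[[:space:]]*on_crash[[:space:]]*=.*/on_crash = "preserve"/' "$cfg"
    else
        printf 'on_crash = "preserve"\n' >> "$cfg"
    fi
}

# Usage (the path is an assumption; the domain name is the one from this post):
#   ensure_on_crash_preserve /etc/xen/pandora.cfg
#   xl create -c /etc/xen/pandora.cfg   # create and attach to the console
#   xl console pandora                  # re-attach to the preserved domain
#   xl destroy pandora                  # clean up once you have the output
```

A preserved domain sticks around until it is destroyed, so it is worth removing the setting (or remembering the `xl destroy`) once the crash has been diagnosed.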

The Culprit

With the DomU state preserved, it was possible to see what was causing the crash:

[ 0.137932] xen:manage: Unable to read sysrq code in control/sysrq

[ 0.138936] dmi: Firmware registration failed.

[ 0.151748] Initramfs unpacking failed: junk in compressed archive

[ 0.166545] Kernel panic - not syncing: VFS: Unable to mount root fs on unknown-block(0,0)

[ 0.166556] CPU: 0 PID: 1 Comm: swapper/0 Not tainted 4.11.7-1-ARCH #1

[ 0.166563] Call Trace:

[ 0.166572] dump_stack+0x63/0x81

[ 0.166579] panic+0xe4/0x22d

[ 0.166586] mount_block_root+0x27c/0x2c7

[ 0.166594] ? set_debug_rodata+0x12/0x12

[ 0.166599] mount_root+0x65/0x68

[ 0.166605] prepare_namespace+0x12f/0x167

[ 0.166610] kernel_init_freeable+0x1f6/0x20f

[ 0.166616] ? rest_init+0x90/0x90

[ 0.166621] kernel_init+0xe/0x100

[ 0.166626] ret_from_fork+0x2c/0x40

[ 0.166632] Kernel Offset: disabled

The important parts are: Initramfs unpacking failed: junk in compressed archive and the Kernel panic - not syncing: VFS: Unable to mount root fs on unknown-block(0,0). There’s a decent AskUbuntu QA on the latter. As the error message says, it can’t find the root filesystem. That was odd, since it could clearly see the root filesystem earlier in the day when it was actually running off that root filesystem. Even now, I could see the root filesystem! I was telling the machine “it’s right there! I can see it!”

As an aside, I had to do an extra step to mount that filesystem. The DomUs I have are LVM-backed, but the LVM logical volumes get passed to the DomU where they show up as a block device, which the DomU guest then puts a partition table on and adds a partition to. So mounting the lv directly causes complaints of “bad superblock, incorrect magic” (or similar).

This can be worked around with kpartx, which creates device mappings for the partitions on a partitioned logical volume. Alternatively, you can mount at an offset, which is what I did: I checked the volume using parted / fdisk and saw the partition started at sector 2048, sectors being 512 bytes in size.

My mount command was therefore:

mount -o ro,offset=$((512*2048)) /dev/vgraid6/pandora-disk /tmp/pandora/

I later mounted it rw to adjust grub.cfg.
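The offset is just the partition's start sector multiplied by the sector size. A minimal sketch of that arithmetic, with a made-up function name, plus the kpartx route in comments for comparison:

```shell
# Byte offset of a partition: start sector * sector size.
# partition_offset is a hypothetical helper; sector size defaults to 512.
partition_offset() {
    start_sector=$1
    sector_size=${2:-512}
    echo $((start_sector * sector_size))
}

# Usage, equivalent to the mount command above:
#   mount -o ro,offset="$(partition_offset 2048)" /dev/vgraid6/pandora-disk /tmp/pandora/
#
# Alternative with kpartx (needs root; mappings appear under /dev/mapper/):
#   kpartx -av /dev/vgraid6/pandora-disk   # add partition mappings, verbosely
#   kpartx -dv /dev/vgraid6/pandora-disk   # remove them when finished
```

Take the start sector and sector size from what parted or fdisk reports for the volume, rather than assuming 2048 and 512.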

After poking around with some boot options, and toying with the idea of (and installing the build tools for) an alternative bootloader for the DomU, I was satisfied that the root filesystem wasn’t actually the problem. So I turned to the ‘junk’ causing the initramfs unpacking to fail. Maybe something had corrupted the initramfs image, maybe there was some other issue with it. I certainly was far from the only person to encounter the issue.

I figured I’d regenerate the initramfs. Referring back to the bible in such matters, the Arch Installation Guide, and its companion canon books, mkinitcpio and (most helpfully): configuring an Arch Xen DomU guest, I needed a live system to do so. I grabbed the latest Arch iso, made the requisite changes as described in the ‘configuring a DomU guest’, and… the DomU failed to boot.

xc: error: panic: xc_dom_bzimageloader.c:775: xc_dom_probe_bzimage_kernel: unknown compression format: Invalid kernel

xc: error: panic: xc_dom_core.c:702: xc_dom_find_loader: no loader found: Invalid kernel

libxl: error: libxl_dom.c:638:libxl__build_dom: xc_dom_parse_image failed: No such file or directory

libxl: error: libxl_create.c:1223:domcreate_rebuild_done: cannot (re-)build domain: -3

Ohh, oops. I ran into this problem about 5 years ago- my version of Xen doesn’t like newer kernels. In this case it’s the compression format (xz). So I had to head to the Arch Linux Archive and grab an old old ISO. Once I did so, the DomU booted the Arch installer environment (yay!), and I was able to regenerate the initramfs. I tried booting that and it… didn’t work (boo).

Though time was wearing on, I felt close to the culprit. Maybe I had accidentally upgraded the kernel? I checked that I was still running an old kernel which Xen wouldn’t barf on: 4.11.7. Hmm, that’s definitely old. So I had a look through /var/log/pacman.log (it’s a trove of useful information, genuinely) and the pieces fell into place.
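A quick way to pull package changes for a given day out of the pacman log is sketched below. The function name is made up, and it assumes log lines begin with a bracketed date such as `[2022-05-14` followed by an `[ALPM]` tag, which is how pacman has written them on the systems I have seen.

```shell
# Show packages installed or upgraded on a given day (YYYY-MM-DD).
# upgrades_on is a hypothetical helper; the log path defaults to pacman's usual one.
upgrades_on() {
    day=$1
    log=${2:-/var/log/pacman.log}
    # Match the date at the start of the bracketed timestamp, then ALPM actions
    grep -F "[$day" "$log" | grep -E '\[ALPM\] (installed|upgraded) '
}

# Usage:
#   upgrades_on 2022-05-14
#   upgrades_on 2022-05-14 | grep mkinitcpio
```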

The Case

- Before travelling, I needed to back up photos on my phone

- To do this, I decided to install Syncthing on my backup server

- On 2022-05-14 at 11:17, I ran a system upgrade (pacman -Syu)

- This did not upgrade the kernel, as I had (cleverly) pinned the version to avoid problems with it

- However, a new version of mkinitcpio was installed (as well as other applications and utilities)

- The initramfs was regenerated using a different compression format

- The old 4.11.7 kernel could not decompress this on boot

Putting it Back Together

I hopped into #archlinux with a plea from the edge of madness:

<bertieb> Awful question, but I'm deep in xkcd sharks territory here... say I wanted to stay on a particular kernel version; which packages would I have to also keep at that version to let the system keep booting?

We figured out the compression format (zstd, which became the default a while back) was causing the issue, or at least contributing to it. Since I was pinned to an older kernel (remember, my Xen version doesn’t like newer kernels), it was unable to boot. I added COMPRESSION=cat to my /etc/mkinitcpio.conf and rebuilt the initramfs. With that I could successfully boot, some five-ish hours later.
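In hindsight, checking how the initramfs image was compressed would have saved some time. The sketch below guesses the format from the file's first few bytes. The function name is made up, the list of magic numbers is not exhaustive, and an image with an uncompressed microcode archive prepended will report as cpio regardless of how the main archive is compressed.

```shell
# Guess an initramfs image's compression from its leading magic bytes.
# initramfs_format is a hypothetical helper; unknown formats print "unknown".
initramfs_format() {
    # First six bytes as a lowercase hex string, e.g. 28b52ffd...
    magic=$(od -An -tx1 -N6 "$1" | tr -d ' \n')
    case $magic in
        28b52ffd*) echo zstd ;;
        1f8b*) echo gzip ;;
        fd377a585a00*) echo xz ;;
        425a68*) echo bzip2 ;;
        02214c18*|04224d18*) echo lz4 ;;
        30373037303[12]*) echo cpio ;;   # uncompressed, as with COMPRESSION=cat
        *) echo unknown ;;
    esac
}

# Usage (the image name is the usual Arch default, which is an assumption here):
#   initramfs_format /boot/initramfs-linux.img
```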

Lessons and Take Away Points

There are a few lessons that can be pulled out of the rubble of this experience, and getting something positive out of it would help me feel better about the time spent!

Do Things As Right As You Can First Time

There is well-meaning advice, which says “Do it right the first time”. It’s not bad advice, if you have the resources for that. But whether that’s time or money (or another resource, like knowledge), it’s universally pretty rare to have resources to do things perfectly or fully ‘right’ the first time.

Looking back, I could perhaps have used a different hypervisor to Xen; but I was migrating from another Xen host, and promptly getting services back up and running after migration was my priority at the time. When I discovered that later kernels wouldn’t boot on my version of Xen, I had a couple of options: fix the issue, or work around it. I chose the latter. I could have investigated, maybe updated Xen and hoped it wouldn’t break anything else. Instead I decided not to upgrade the kernel on that one particular guest, and I tried to protect myself from me in the future by pinning the version so that pacman wouldn’t upgrade the kernel. That’s less than ideal for other reasons, but it seemed like the right trade off to make at the time.

Ask For Help If You Need To

This is something I’ve gotten much better at doing than when I was a young kid. Obviously it’s important to know where to look things up oneself, both from the perspective of “be able to solve your own problems” and so as not to waste other folks’ time with trivial or easily-answered queries. In two cases here I was able to get to the root of a problem thanks to input from people with more experience and/or a different perspective.

Sometimes You Need to Shave a Yak

Despite best efforts, things will go wrong. Software will have bugs, hardware will fail, people will make mistakes. It is irksome to lose time to things, especially when they amount to yak shaving: investigating a non-functional DomU is not how I planned to spend my day; I started out intending to get a photo off my phone. But the best laid plans gang aft agley- sometimes you just have to roll with the punches.