From the Department of Wordy Titles

I have written a set of tools to make interacting with YouTube simpler and more straightforward, streamlining my workflow.

In its current state, the workflow roughly looks like:

- record a bunch of videos

- upload the files and leave them in place

- run `genjson` on them to create a JSON template, including a reasonably-spaced publish schedule

- run `get_ids` to associate the JSON entries with the video’s YT `videoId`

- go through the videos, rewatch to decide on title, description and thumbnail frame and include this in the JSON entry

- run `uploadytfootage` to update the metadata

Most of the above is highly automated; even step 2 could be done away with if the default YouTube API quota didn’t limit one to roughly six videos per day.
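The "roughly six videos per day" figure can be reproduced with a quick back-of-the-envelope calculation. This is only a sketch: the two quota numbers below are assumptions based on the commonly documented defaults for the YouTube Data API (a daily allowance of 10,000 units and a cost of 1,600 units per upload call), and they may not match a given project's actual quota.

```python
import math

# Assumed defaults, not taken from the post: check the current quota docs.
DAILY_QUOTA_UNITS = 10_000   # assumed default daily allowance per project
UPLOAD_COST_UNITS = 1_600    # assumed cost of a single upload call

# Whole uploads that fit into one day's quota.
uploads_per_day = DAILY_QUOTA_UNITS // UPLOAD_COST_UNITS

# Days needed to push a backlog through the API at that rate.
backlog = 45
days_needed = math.ceil(backlog / uploads_per_day)

print(uploads_per_day, days_needed)
```

Under those assumptions, a backlog of 45 videos would take over a week to upload through the API alone, which is why uploading by hand stays in the process.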

The most labour-intensive part of the process is step 5. Because of the batch nature of the job, sometimes quite a few videos can pile up. For example, at time of writing I have 45 Hunt: Showdown videos from the past ten days to do.

Getting a short, catchy yet descriptive title and description for each of those will involve reacquainting myself with what those rounds entailed. So I decided recently that I would try to do some of that work as I go: between rounds of Hunt, write out a putative title and description associated with a video file to another JSON file.

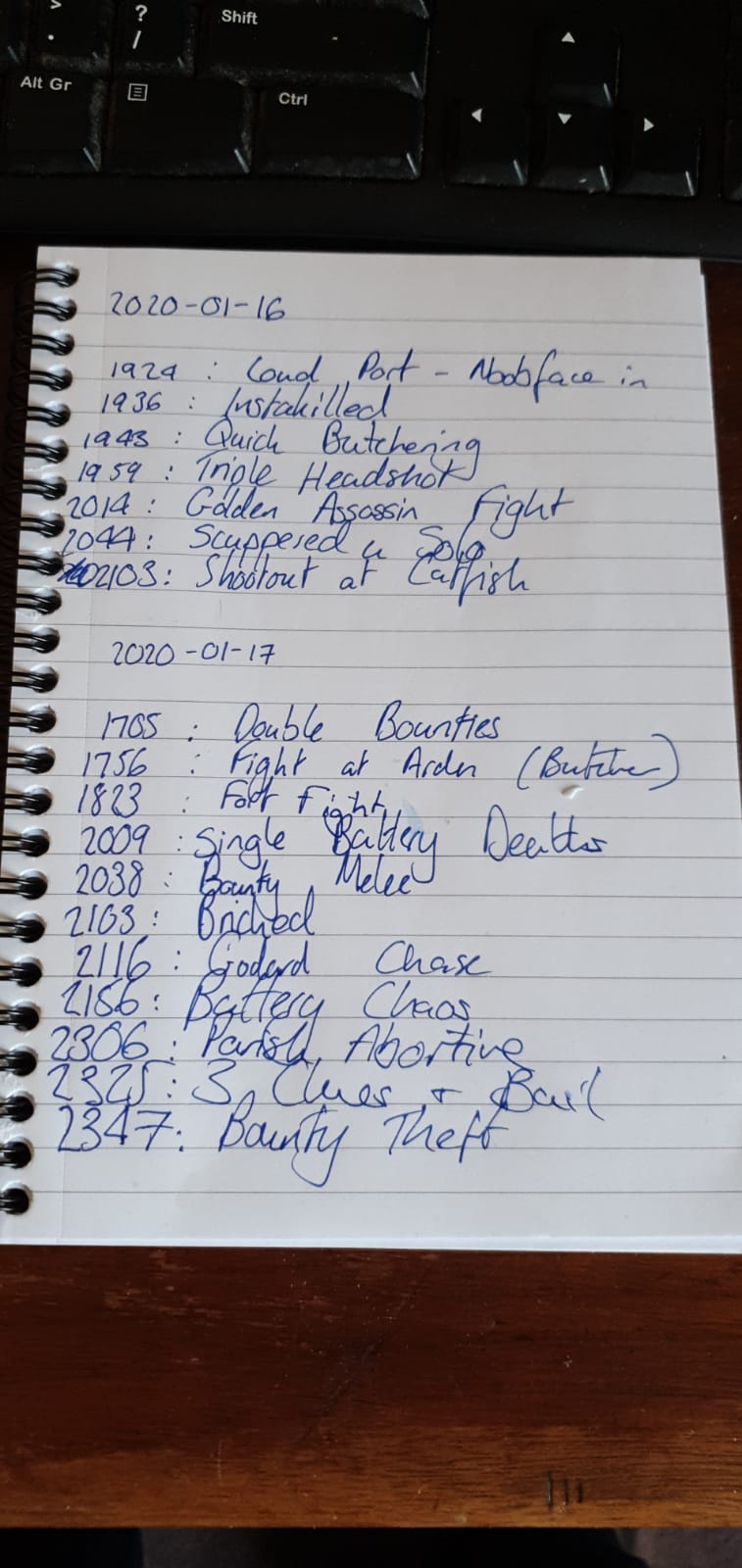

I also capture a short snippet or potential title on a notepad on my desk:

Between those, hopefully the process will be a bit easier.

I also cooked up a short script to merge together the two JSON files. The crux of it is the filter that selects from the ‘contemporaneous note’ if it has an associated entry for a file in the generated JSON template list.

We are working with a list of dicts, so a list comprehension is handy. We want to select from the list of dicts an entire dict that matches the filename of the video. Roughly speaking:

`next(item for item in json_c if item["file"] == filename)`

Docs: list comprehension, next()

SO example: Python list of dictionaries search
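To show how that filter might slot into a merge, here is a minimal sketch rather than the actual script. The `"file"` key comes from the snippet above; the `"title"` and `"description"` keys, the function names and the sample filenames are all assumptions made up for illustration. Passing `None` as the default to `next()` avoids a `StopIteration` when a video has no contemporaneous note.

```python
import json


def find_note(notes, filename):
    """Return the first note whose "file" matches, or None if there is none."""
    return next((item for item in notes if item["file"] == filename), None)


def merge_notes(template, notes, fields=("title", "description")):
    """Copy non-empty note fields over the matching template entries.

    The field names are hypothetical; adjust them to the real JSON layout.
    """
    merged = []
    for entry in template:
        updated = dict(entry)  # shallow copy so the template stays untouched
        note = find_note(notes, entry["file"])
        if note is not None:
            for field in fields:
                if note.get(field):
                    updated[field] = note[field]
        merged.append(updated)
    return merged


# Made-up sample data standing in for the two JSON files.
template = [
    {"file": "video_001.mp4", "title": "", "description": ""},
    {"file": "video_002.mp4", "title": "", "description": ""},
]
notes = [
    {"file": "video_001.mp4", "title": "Draft title", "description": "Draft description"},
]

merged = merge_notes(template, notes)
print(json.dumps(merged, indent=2))
```

Entries without a note pass through unchanged, so the merged list can still be filled in by hand afterwards.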

If I am able to keep on top of titles and descriptions as I go, the only thing needed will be to find a good thumbnail frame! (though that’s kinda time consuming in itself, perhaps ML could be applied to that…)

Edit: Yes! Deep neural net thumbnails and convolutional neural nets (PDF)