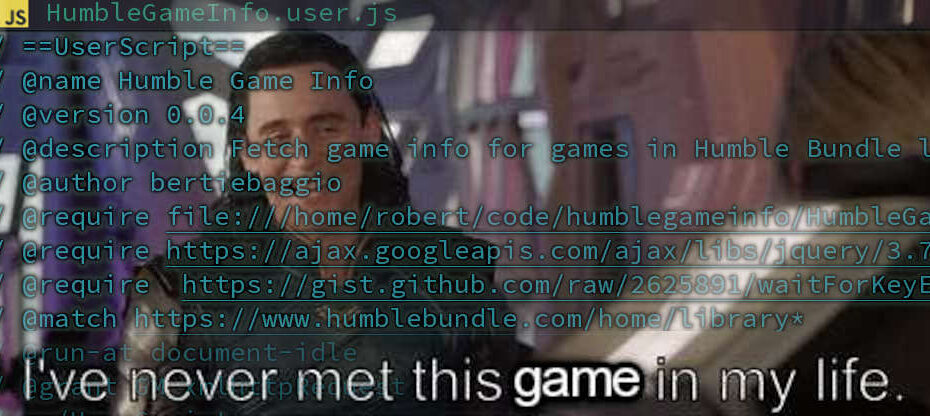

Humble Game Info Userscript: Concept and Starting From Scratch

These look interesting, tell me more

Background

Way back in the mists of time, in 2010, something cool happened. A new kid on the block…